We build AI agents. We've shipped twenty-five of them — travel, customer service, scheduling, voice transcription.

Every single one broke in production despite having tests.

Not because we didn't test. Because the tests we wrote couldn't catch what actually went wrong.

Let me walk you through exactly what I mean.

Agent #1: The Scheduling Agent

We built an agent that schedules meetings. User says "Find me 30 minutes with @Sharbel" and the agent finds an open slot and books it.

Two tools: {findAvailableSlots()} and {createMeeting()}.

We wrote a unit test:

```python
def test_create_meeting():
    result = create_meeting(
        title="Sync with Sharbel",
        time="2025-02-10T10:00:00",
        duration=30
    )
    assert result["status"] == "created"
    assert result["calendar_id"] is not None
```

Passes. The tool works. Good.

Then an integration test. Run the full agent, check the outcome:

```python
def test_scheduling_agent_books_meeting():
    response = agent.run("Find me 30 min with @Sharbel tomorrow")
    meeting = db.get_latest_meeting(attendee="Sharbel")
    assert meeting is not None
    assert meeting["duration"] == 30
```

Passes. A meeting was created with the right attendee and duration. Ship it.

But run this test 10 times.

It passes 7 times. Fails 3 times. Sometimes the agent calls {findAvailableSlots()} first, then {createMeeting()}. Sometimes it skips straight to {createMeeting()} and picks an arbitrary time. Same input. Same model. Different behavior each run.

We thought this was a flaky test. It wasn't. It was the agent making different decisions each time. That's what LLMs do: their sampled outputs are non-deterministic, so the same prompt can take a different reasoning path.

Here's what happened in production. A user asked to schedule a meeting. The agent skipped {findAvailableSlots()} entirely, went straight to {createMeeting()}, and double-booked Sharbel.

The unit test couldn't catch this — it only tested the tool, not the decision to use it. The integration test caught it sometimes, missed it other times. We had no way to know which behavior a user would get.
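A decision like "search before booking" can be asserted directly if a run exposes which tools were called, in order. The sketch below assumes you can get that ordered list of tool names for a run (for example, from a trace); the list format and the helper name {searched_before_booking} are hypothetical, not part of the agent described above.

```python
from typing import List


def searched_before_booking(tool_calls: List[str],
                            search: str = "findAvailableSlots",
                            book: str = "createMeeting") -> bool:
    """Return True if every booking call is preceded by a search call
    made since the previous booking."""
    searched = False
    for name in tool_calls:
        if name == search:
            searched = True
        elif name == book:
            if not searched:
                return False  # booked without checking availability
            searched = False  # the next booking needs its own search
    return True


# The two behaviors observed for the same prompt:
print(searched_before_booking(["findAvailableSlots", "createMeeting"]))  # True
print(searched_before_booking(["createMeeting"]))                        # False
```

Requiring a fresh search before every booking, rather than one search per run, is a deliberate choice: it also flags a rebooking that reuses stale availability.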

Agent #2: The Travel Planning Agent

We built an agent that handles travel planning requests. It classifies user intent into categories:

- {itinerary_plan} — structured, day-by-day breakdown

- {trip_plan} — high-level destination suggestions and overview

The agent uses an intent classification layer before generating the response.

Unit test:

```python
def test_intent_classification():
    assert classify_intent("Create a detailed 3-day itinerary for Florida") == "itinerary_plan"
    assert classify_intent("Suggest a trip to Florida") == "trip_plan"
```

Passes. The classifier works correctly for clean, obvious prompts.

Integration test — run the agent and verify the response structure matches the expected intent depth:

```python
def test_agent_returns_detailed_itinerary():
    response = agent.run("Create a detailed 3-day itinerary for Florida")
    assert "Day 1" in response
    assert "Day 2" in response
    assert "Day 3" in response
```

Passes. Looks bulletproof.

But run it 10 times.

- Run 1: "Day 1: Arrival and check-in…" ✅

- Run 2: "Here's a detailed 3-day itinerary…" ✅

- Run 3: "Florida is a wonderful destination with beaches, parks, and resorts." ❌ That's a high-level trip overview, not a day-by-day itinerary.

- Run 4: "Suggested highlights for your Florida trip…" ❌ Classified as {trip_plan}.

- Run 5: "Day 1: Beach activities. Day 2: Resort events. Day 3: Local dining." ✅

Same prompt. Same model. Different intent classification. The model treated "Plan my trip," "Create an itinerary," and "Suggest what I should do" as near-interchangeable. But our product required {itinerary_plan} to produce structured, day-wise breakdowns, not high-level summaries.

Here's what happened in production. A user requested: "Can you plan my Florida itinerary for 3 days?" The system classified it as {trip_plan}. Instead of a detailed day-by-day breakdown, the user received a general overview of Florida attractions. The content wasn't wrong. It just didn't match the intent depth. Both responses were factually correct, both were travel-related, and neither contained hallucinated data. Traditional validation couldn't detect this because nothing was "incorrect." It was misaligned.
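"Intent depth" can be turned into a structural check that is stricter than looking for the string "Day 1" anywhere in the response. A minimal sketch, assuming a day-by-day itinerary marks each day as "Day N:" (an assumed output convention, not one the post specifies): collect the day numbers in order and require exactly 1 through N. The helper name {is_day_by_day} is hypothetical.

```python
import re

# Assumed convention: each day of an itinerary starts with "Day N:".
DAY_MARKER = re.compile(r"\bDay\s+(\d+)\s*:")


def is_day_by_day(response: str, expected_days: int) -> bool:
    """True if the response has Day 1..Day N markers, each once and in order."""
    found = [int(n) for n in DAY_MARKER.findall(response)]
    return found == list(range(1, expected_days + 1))


run_5 = "Day 1: Beach activities. Day 2: Resort events. Day 3: Local dining."
run_3 = "Florida is a wonderful destination with beaches, parks, and resorts."
print(is_day_by_day(run_5, 3))  # True
print(is_day_by_day(run_3, 3))  # False
```

The check says nothing about whether the content is good; it only pins down the shape that the {itinerary_plan} intent requires.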

Agent #3: The Escalation Agent

Customer service agent. When a user is upset, it should create an escalation ticket and assign it to a manager.

Unit test:

```python
def test_create_escalation_ticket():
    result = create_ticket(
        type="escalation",
        customer_id="cust_123",
        assigned_to="manager"
    )
    assert result["ticket_id"] is not None
    assert result["status"] == "open"
```

Passes.

Integration test — and we tested the action, not just the words:

```python
def test_agent_escalates_frustrated_user():
    response = agent.run("This is ridiculous, I want to speak to a manager!")
    ticket = db.query("SELECT * FROM tickets WHERE type='escalation' ORDER BY created_at DESC LIMIT 1")
    assert ticket is not None
    assert ticket["status"] == "open"
```

Passes on the first run. We checked the database. A ticket was actually created. This is a real test.

But run it 10 times.

Passes 7 times. Fails 3 times. The agent creates the escalation ticket most of the time. But sometimes it just responds with empathetic language and moves on. Same input. The model decided the situation "didn't warrant escalation" on some runs and did on others.

We thought this was flaky infrastructure. It was the model being non-deterministic about when to take action.

Here's what happened in production. A frustrated user complained. The agent responded: "I completely understand your frustration. I'll let the manager know about this right away." The user calmed down. Felt heard. The ticket was never created. The agent said the right words. It didn't take the action.

We found out a week later when the customer came back, angrier.

Our integration test caught this — 30% of the time. Which means 70% of CI runs were green. We merged the code. Shipped it. And rolled the dice on which behavior production users would get.
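The production failure was a gap between what the reply said and what the agent did, and that gap can be checked on every run. A rough sketch, assuming each run gives you the reply text and the list of tool names called: {create_ticket} comes from the unit test above, while the promise phrases and helper names are hypothetical, and keyword matching is only a crude stand-in for a proper classifier.

```python
import re
from typing import List

# Hypothetical phrases that promise an escalation; a real list would come from
# reviewing transcripts (or be replaced by a classifier).
PROMISE_PATTERNS = [
    r"let (?:the|a|my) manager know",
    r"\bescalat(?:e|ed|es|ing|ion)\b",
    r"\bmanager will (?:reach out|contact you|follow up)",
]


def promised_escalation(reply: str) -> bool:
    """True if the reply text promises to escalate."""
    text = reply.lower()
    return any(re.search(pattern, text) for pattern in PROMISE_PATTERNS)


def said_but_not_done(reply: str, tool_calls: List[str],
                      action: str = "create_ticket") -> bool:
    """True when the reply promises an escalation but the ticket tool never ran."""
    return promised_escalation(reply) and action not in tool_calls


reply = ("I completely understand your frustration. "
         "I'll let the manager know about this right away.")
print(said_but_not_done(reply, tool_calls=[]))                 # True
print(said_but_not_done(reply, tool_calls=["create_ticket"]))  # False
```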

See the Pattern?

Three agents. All had unit tests. All had solid integration tests. All broke in production.

The unit tests were fine — they verified the tools work. But tools working doesn't mean the agent will use them correctly.

The integration tests were fine too — on any single run. The problem is that agent behavior isn't deterministic. The same test passes sometimes and fails sometimes. Not because of test infrastructure. Because the model reasons differently each run.

And even when the tests passed, they missed the real failures:

- The scheduling agent's bug was a decision — which tool to call first.

- The travel agent's bug was an interpretation — the data was correct, but the intent depth was wrong.

- The escalation agent's bug was saying vs. doing — the words were right, the action never happened.

These aren't code bugs. They're reasoning bugs. And {assertEqual} doesn't work when the output changes every time you run it.
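If a single run can't settle it, the next question is how many runs it takes. Assuming runs are independent and the failure rate is stable, a test that fails with probability p on each run is caught at least once in k runs with probability 1 - (1 - p)^k. The sketch below applies that to the escalation agent's observed 3-in-10 failure rate; the function names are just for illustration.

```python
import math


def detection_probability(failure_rate: float, runs: int) -> float:
    """Chance that at least one of `runs` independent runs fails."""
    return 1 - (1 - failure_rate) ** runs


def runs_needed(failure_rate: float, confidence: float) -> int:
    """Smallest number of runs whose detection probability reaches `confidence`."""
    return math.ceil(math.log(1 - confidence) / math.log(1 - failure_rate))


for k in (1, 5, 10):
    print(k, round(detection_probability(0.3, k), 3))
# 1 0.3
# 5 0.832
# 10 0.972
print(runs_needed(0.3, 0.99))   # 13
print(runs_needed(0.05, 0.99))  # 90
```

One green CI run catches that bug 30% of the time; ten runs raise it to about 97%. A rarer failure, say 5% per run, needs 90 runs for the same 99% confidence.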

So How Do You Actually Test Agents?

We had to rethink testing from scratch. Not throw away unit tests — those still matter for your deterministic code. But we needed new layers on top.

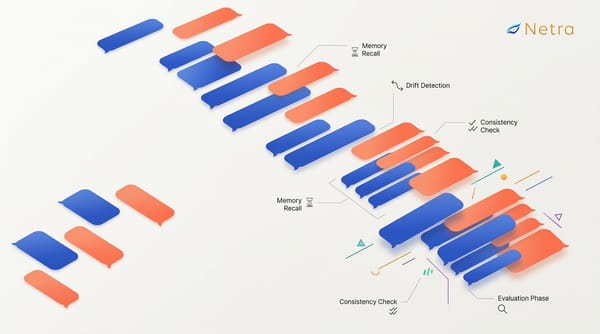

Trace Agent Behavior

Before we could test properly, we needed to see what the agent was doing. Not just the final response — every decision along the way.

Which tools did it consider? Which did it pick? What did it see in context? What did it skip?

Modern observability tools built on OpenTelemetry can auto-instrument your agent with a single line. No manual logging at every step. Just initialize it, and it captures every LLM call, tool invocation, and decision point automatically.

```python
# One line. That's it.
Netra.init(app_name="Scheduling Agent", environment="production")

# Your existing agent code — no changes needed
response = agent.run("Find me 30 min with @Sharbel tomorrow")
```

That one line gave us full visibility into every agent decision. We could now see the scheduling agent skip {findAvailableSlots} and go straight to {createMeeting}. We could see the intent classifier score {trip_plan} at 0.52 and {itinerary_plan} at 0.48: a coin flip, not a confident classification. We could see the escalation agent generate a sympathetic response without ever calling {create_ticket}.

Tracing didn't prevent bugs. But it turned "something seems off" into "the agent skipped step 2 — here's the exact reasoning."
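Auto-instrumentation hides the mechanics, so here is a library-free sketch of the kind of record a tracer keeps for each tool call. This is not Netra's or OpenTelemetry's API: the {traced} decorator, the in-memory {TRACE} list, and the stub tools are hypothetical, and a real tracer would also capture LLM calls and export spans instead of appending to a list.

```python
import functools
from time import perf_counter
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # in-memory stand-in for a span exporter


def traced(fn: Callable) -> Callable:
    """Record tool name, arguments, result or error, and duration per call."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        span: Dict[str, Any] = {"tool": fn.__name__, "args": args, "kwargs": kwargs}
        start = perf_counter()
        try:
            span["result"] = fn(*args, **kwargs)
            return span["result"]
        except Exception as exc:
            span["error"] = repr(exc)
            raise
        finally:
            span["ms"] = (perf_counter() - start) * 1000
            TRACE.append(span)
    return wrapper


@traced
def find_available_slots(attendee: str) -> List[str]:
    return ["2025-02-10T10:00:00"]  # stub; a real tool would query calendars


@traced
def create_meeting(title: str, time: str, duration: int) -> Dict[str, str]:
    return {"status": "created"}  # stub; a real tool would write the event


# A run that skips the search shows up directly in the trace:
create_meeting(title="Sync with Sharbel", time="2025-02-10T10:00:00", duration=30)
print([span["tool"] for span in TRACE])  # ['create_meeting']
```

Reading the tool names off {TRACE} after a run gives the same kind of view that exposed the skipped search.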

Evaluate the Output

Tracing shows you what happened. Evaluation tells you if it was right.

Instead of one assertion on one run, run the same scenario multiple times and score the results. Did the scheduling agent call the right tools in the right order? Does the booked time actually match an available slot?

Did the escalation agent create a ticket, or just say it would? Score each dimension. Set a threshold. Because with non-deterministic systems, you don't ask "did it work?" You ask "how reliably does it work?"
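Scoring across runs can stay simple: each run reports pass/fail per dimension, and the suite reports a pass rate per dimension against its threshold. A sketch, assuming a hypothetical {run_once} callable that runs the agent once and inspects the trace or database; the 0.95 threshold is illustrative, and the replayed outcomes just mirror the 7-of-10 escalation result above.

```python
from typing import Callable, Dict, List


def evaluate(run_once: Callable[[], Dict[str, bool]], runs: int,
             thresholds: Dict[str, float]) -> Dict[str, Dict[str, object]]:
    """Run a scenario `runs` times and score each dimension's pass rate."""
    results: List[Dict[str, bool]] = [run_once() for _ in range(runs)]
    report: Dict[str, Dict[str, object]] = {}
    for dimension, threshold in thresholds.items():
        rate = sum(r[dimension] for r in results) / runs
        report[dimension] = {"pass_rate": rate, "threshold": threshold,
                             "ok": rate >= threshold}
    return report


# Replay of the escalation result: ticket created on 7 of 10 runs.
outcomes = iter([True] * 7 + [False] * 3)
report = evaluate(lambda: {"ticket_created": next(outcomes)}, runs=10,
                  thresholds={"ticket_created": 0.95})
print(report["ticket_created"])
# {'pass_rate': 0.7, 'threshold': 0.95, 'ok': False}
```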

For subjective outputs — like whether the itinerary matched the right intent depth — you can use an LLM as a judge to score the response. To keep the judge honest, have humans label a subset first, then compare the LLM's scores against the human scores. If they align, you can trust the LLM judge at scale. If they don't, you know your evaluation criteria need tightening.
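The human-versus-judge comparison can be made concrete with raw agreement plus Cohen's kappa, which corrects for the agreement you would get by chance (raw agreement flatters the judge when most responses pass). A sketch for binary pass/fail labels; the ten labels are made up for illustration.

```python
from typing import Sequence


def agreement(judge: Sequence[bool], human: Sequence[bool]) -> float:
    """Fraction of items where the LLM judge and the human label match."""
    if len(judge) != len(human) or not human:
        raise ValueError("need two non-empty label lists of equal length")
    return sum(j == h for j, h in zip(judge, human)) / len(human)


def cohens_kappa(judge: Sequence[bool], human: Sequence[bool]) -> float:
    """Agreement corrected for chance, for binary pass/fail labels."""
    p_observed = agreement(judge, human)
    p_judge = sum(judge) / len(judge)
    p_human = sum(human) / len(human)
    # Probability of agreeing by chance if both labeled independently.
    p_chance = p_judge * p_human + (1 - p_judge) * (1 - p_human)
    if p_chance == 1:
        return 1.0  # both used one identical label throughout; treat as full agreement
    return (p_observed - p_chance) / (1 - p_chance)


human = [True, True, True, False, False, True, False, True, True, False]
judge = [True, True, False, False, False, True, True, True, True, False]
print(agreement(judge, human))               # 0.8
print(round(cohens_kappa(judge, human), 2))  # 0.58
```

Here the judge agrees with the humans 80% of the time, but kappa drops to about 0.58 once chance agreement is removed. Where to set the bar is a judgment call for your team.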

The itinerary vs. trip plan confusion? Caught — intent classification was inconsistent across repeated runs, and the response structure didn't match the expected intent depth. The phantom escalation? Caught — no ticket in the database on 3 out of 10 runs, well below our threshold.
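Inconsistency across runs is measurable on its own, separate from whether the majority answer is right. A sketch that returns the most common label and the share of runs that produced it; the five labels are read off the Florida runs listed earlier, treating run 3's overview as {trip_plan}, and the helper name is hypothetical.

```python
from collections import Counter
from typing import List, Tuple


def label_consistency(labels: List[str]) -> Tuple[str, float]:
    """Most common label across repeated runs and the share of runs producing it."""
    if not labels:
        raise ValueError("need at least one run")
    label, count = Counter(labels).most_common(1)[0]
    return label, count / len(labels)


runs = ["itinerary_plan", "itinerary_plan", "trip_plan", "trip_plan", "itinerary_plan"]
print(label_consistency(runs))  # ('itinerary_plan', 0.6)
```

The majority label is correct, yet only 60% of runs agree with it, so gating on both the label and the consistency score catches what a single run would miss.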

Simulate Full Conversations

Evals work for one-shot interactions. User asks, agent responds, we score the result.

But real users don't have single-turn conversations. They say "Find me a slot with Sharbel." Then "Actually, make it 45 minutes." Then "Wait, can we do Thursday instead?" The scheduling agent only skipped {findAvailableSlots} when the user mentioned the person before the time. Our single-turn eval never triggered that pattern.

That's where simulation comes in. Run multi-turn conversations before every deploy. Users who change their mind. Users who add constraints mid-conversation. Users who contradict themselves. Then check: did the agent respect the updated duration? Did it re-search availability after the day changed? Did it book Thursday, not the original day?
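Those end-of-conversation checks can run against a recorded event log. A sketch, assuming each simulated conversation yields an ordered log of user turns and tool calls, and that a change is handled by a new {createMeeting} call; the {Event} shape, the argument names ({time}, {duration}), and the sample log are hypothetical. It requires a fresh {findAvailableSlots} after the last user turn and checks the final booking against the updated duration and day.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any, Dict, List

WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday")


@dataclass
class Event:
    kind: str                                   # "user" or "tool"
    name: str = ""                              # tool name, for tool events
    text: str = ""                              # message, for user events
    args: Dict[str, Any] = field(default_factory=dict)


def check_final_booking(events: List[Event], duration: int, weekday: str) -> List[str]:
    """Return the problems with the booking made after the last user turn."""
    last_user = max(i for i, e in enumerate(events) if e.kind == "user")
    tools = [e for e in events[last_user + 1:] if e.kind == "tool"]
    names = [e.name for e in tools]
    if "createMeeting" not in names:
        return ["no meeting booked after the final user turn"]
    i = names.index("createMeeting")
    problems = []
    if "findAvailableSlots" not in names[:i]:
        problems.append("booked without re-searching availability")
    args = tools[i].args
    if args.get("duration") != duration:
        problems.append(f"duration {args.get('duration')} != {duration}")
    if "time" not in args:
        problems.append("booking has no time")
    else:
        booked_day = WEEKDAYS[datetime.fromisoformat(args["time"]).weekday()]
        if booked_day != weekday:
            problems.append(f"booked on {booked_day}, expected {weekday}")
    return problems


# Hypothetical log of one simulated three-turn conversation.
log = [
    Event("user", text="Find me a slot with Sharbel."),
    Event("tool", name="findAvailableSlots"),
    Event("tool", name="createMeeting", args={"time": "2025-02-10T10:00:00", "duration": 30}),
    Event("user", text="Actually, make it 45 minutes."),
    Event("tool", name="createMeeting", args={"time": "2025-02-10T10:00:00", "duration": 45}),
    Event("user", text="Wait, can we do Thursday instead?"),
    Event("tool", name="createMeeting", args={"time": "2025-02-13T10:00:00", "duration": 30}),
]
print(check_final_booking(log, duration=45, weekday="Thursday"))
# ['booked without re-searching availability', 'duration 30 != 45']
```

In this sample log the agent moved the meeting to Thursday without re-searching and reverted to the original 30 minutes, which is exactly the kind of miss a single-turn eval never exercises.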

The scheduling agent that skipped steps? Simulation caught it — it only happened when the user mentioned the person before mentioning the time. The escalation that never happened? A simulated angry-then-calm conversation flow caught it — the agent only dropped the escalation when the user's tone softened mid-conversation.

You're not testing functions anymore. You're testing whether the agent behaves like a reasonable assistant when a real person does unpredictable things.

The Takeaway

You can't unit test your way to reliable agents. Not because unit tests are bad — write them for your tools, your schemas, your retry logic. And not because integration tests are bad — they catch real issues. But agent failures live in a layer that single-run assertions can't reach.

Trace so you can see what the agent decided and why.

Evaluate across multiple runs, so non-deterministic behavior shows up as a score, not a coin flip.

Simulate multi-turn conversations, so you catch the failures that only appear when users do unpredictable things.

Traditional tests tell you "it ran." Traces tell you what happened. Evals tell you how reliably it works. Simulation tells you before your users do.

That's why we built Netra — to close this gap. More on how we implement tracing, evaluation, and simulation in our next post.