Highlights

- Most eval frameworks are built around a single turn. They tell you whether a response looked right. They can't tell you whether a conversation actually worked.

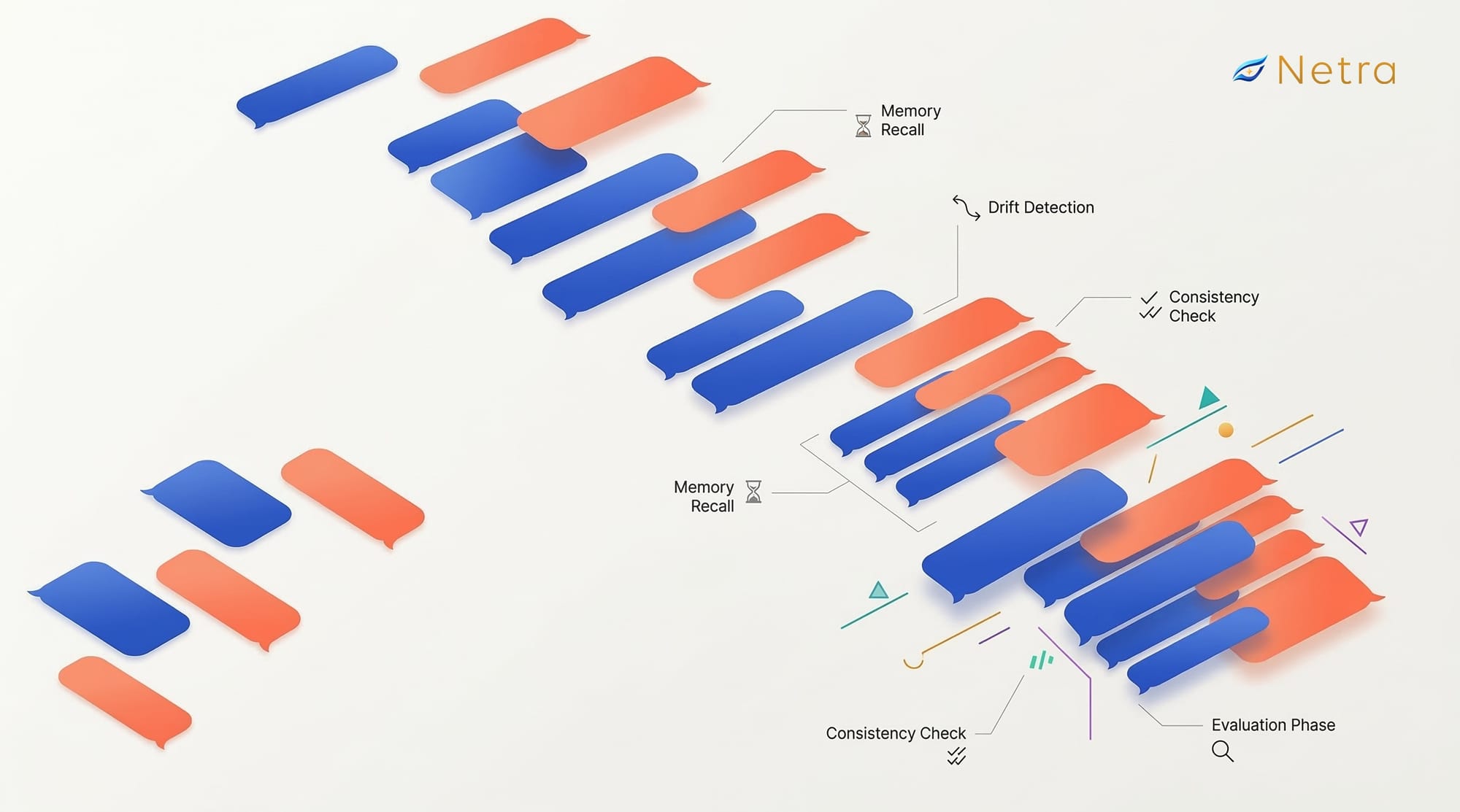

- Multi-turn conversations introduce failure modes that don't exist at the turn level: context drift, contradiction handling, mid-conversation persona switches, and memory failures that only surface after turn 10 or 15.

- The unit of evaluation changes everything. Scoring individual responses is proofreading. Scoring the full conversation is actually reading the story.

- Conversation-level metrics - Goal Fulfillment, Conversation Memory, Guideline Adherence - move independently of each other. High flow with low fulfillment is its own failure mode, and a single aggregate score would hide it entirely.

- Turn-level scores are optimistic by nature. Agents that looked perfectly shippable on per-turn scoring told a very different story when evaluated across full conversation runs - Goal Fulfillment and Conversation Memory dropped significantly, revealing problems that turn-level evals had been quietly masking all along.

We had to build a role-play agent for a demo in 5 days. So we did what any team under pressure does - gathered the requirements, vibe-coded our way through it, and boom, the demo was a success.

But then came the harder part: stabilising it and actually building on top of it.

A bit of context on what we'd built. It was a sales training tool. The idea was simple - before a sales rep gets on a real cold call, they practice with our role-play agent first. The agent plays the prospect: the person on the other end of the phone who didn't ask to be called, has their own mood and context, and may or may not want to talk. The sales rep has to navigate that. The role-play agent has to feel real enough to be worth practising against. So we built personas with difficulty levels, emotional states, background context, behavioural quirks - the works.

Getting it working was one problem. Knowing whether it was actually good - that was a completely different one.

Now, what even is a stable agent? It's a genuinely tricky question when something can generate 10 different outputs across 10 identical runs. So we did what every AI engineer does - set benchmarks, wrote evaluations, committed to letting numbers tell us whether v2 was actually better than v1, not just vibes and random test runs.

But this time, the evaluation felt different. And it took us a while to understand why.

The Problem With How We'd Always Done Evals

We'd done evaluations before. Tool call accuracy, response quality, latency under load. For most agents, the pattern is familiar - give the model an input, get an output, score it. Most frameworks work this way. RAGAS is built around per-query retrieval quality. LangSmith gives you excellent turn-level tracing. DeepEval has solid per-response metrics.

All of them are great at answering: did this response look right?

None of them are built to answer: did this conversation actually work?

And for an AI agent playing a human prospect across a 15-turn cold call, that second question is the only one that matters. A prospect who is rude in turn 2 but suddenly warm in turn 8 for no reason - that's a broken role-play agent. A prospect who forgets they already gave their budget constraint three turns ago - also broken. A prospect who stays perfectly consistent turn-by-turn but never actually feels like a human - completely useless for training.

None of these failures show up in turn-level evals. Every individual response can look fine. The conversation as a whole is still a mess.
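To make that gap concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Turn` structure, the `tone` labels (imagined as coming from annotation or a classifier), and both checks are hypothetical and are not part of any framework mentioned above. It shows a conversation where every turn passes a per-turn check while a conversation-level check still flags the rude-to-warm flip.

```python
from dataclasses import dataclass

ALLOWED_TONES = {"rude", "neutral", "warm"}


@dataclass
class Turn:
    speaker: str  # "rep" or "prospect"
    text: str
    tone: str     # hypothetical label, e.g. from annotation or a classifier


def turn_level_pass(turn: Turn) -> bool:
    # A per-turn check only sees one response: non-empty and in an allowed tone.
    return bool(turn.text.strip()) and turn.tone in ALLOWED_TONES


def abrupt_tone_flips(turns: list[Turn]) -> list[int]:
    """Indices of prospect turns that jump straight from rude to warm."""
    flips = []
    previous_tone = None
    for index, turn in enumerate(turns):
        if turn.speaker != "prospect":
            continue
        if previous_tone == "rude" and turn.tone == "warm":
            flips.append(index)
        previous_tone = turn.tone
    return flips


conversation = [
    Turn("rep", "Hi, do you have a minute?", "neutral"),
    Turn("prospect", "I'm busy. Who is this?", "rude"),
    Turn("rep", "I'm calling about your logistics costs.", "neutral"),
    Turn("prospect", "Wonderful, tell me everything!", "warm"),
]

per_turn_ok = all(turn_level_pass(t) for t in conversation)
flips = abrupt_tone_flips(conversation)
print(f"every turn passes: {per_turn_ok}, unexplained flips at: {flips}")
```

A real check would need to account for flips that the rep actually earned. The point here is only that the second function needs the whole history, which a per-turn scorer never sees.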

So What Is Multi-Turn, Exactly?

Before getting into what breaks, it's worth being precise about what multi-turn actually means. A multi-turn conversation is any interaction where the agent needs to carry context across multiple exchanges - where what was said in turn 3 should still matter in turn 15. It's not a series of independent prompts. It's a single, evolving thread. And that changes everything about how you evaluate it.

What Simulating Multi-Turn Conversations Actually Catches

This is where things got interesting. To properly test the role-play agent, we needed something that could actually run full conversations against it - not just probe it turn by turn. That's when we started using Netra, which does exactly this: it simulates a complete multi-turn conversation with your agent, end to end, and then evaluates the entire interaction as a whole.

Once we started running these full conversation simulations, we started seeing failure modes we hadn't even thought to look for.

Context drift - The role-play agent starts the conversation following its persona correctly. By turn 8, it's subtly shifted. By turn 12, it's behaving like a completely different kind of prospect than the one defined. No single turn looks obviously wrong. But the cumulative drift makes the agent useless for training, because the sales rep is now practising against an inconsistent character, not a realistic one.

Contradiction handling - Real prospects change their position mid-conversation - that's just how people work. Someone says they're not interested, then asks a clarifying question that suggests they actually are. Does the role-play agent track that shift and respond accordingly? Or does it keep acting disinterested regardless? Turn-level evals never catch this because they don't score how the agent handles its own history.

Mid-conversation persona switches - We'd define a prospect persona - say, a skeptical mid-level manager at a logistics company. Three turns in, the role-play agent would subtly start responding like a different kind of person entirely. Not obviously wrong, just... off. The kind of thing a sales rep would notice and find disorienting, but that looks fine on any individual turn score.

Memory failures under load - The role-play agent remembers what the sales rep said in turn 2 when you're scoring turn 3. But does it still factor that in at turn 18, after the conversation has taken five different directions? In our testing, memory reliability dropped noticeably in longer conversations - not because the model couldn't remember, but because nothing was ensuring it actually did.

None of these showed up until we had a way to run and score the full conversation. That's the gap Netra's simulation fills.
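One way to put a number on the memory-failure pattern is to group recall checks by how many turns separate the moment a fact was stated from the moment it should have been used. The sketch below is a rough illustration only: the record format, the `recall_by_distance` helper, and the observations are all hypothetical, and none of the values are results from our runs or from Netra.

```python
from collections import defaultdict

# Each record: (turn where the rep stated a fact,
#               turn where the prospect should have used it,
#               whether the prospect actually used it).
# Hypothetical observations, for illustration only.
observations = [
    (2, 3, True),
    (2, 18, False),
    (4, 6, True),
    (4, 14, False),
    (5, 9, True),
    (7, 17, True),
]


def recall_by_distance(records, bucket_size=5):
    """Recall rate grouped by the turn distance between statement and use."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for stated_at, needed_at, recalled in records:
        bucket = (needed_at - stated_at) // bucket_size
        totals[bucket] += 1
        hits[bucket] += int(recalled)
    return {
        f"{b * bucket_size}-{(b + 1) * bucket_size - 1} turns": hits[b] / totals[b]
        for b in sorted(totals)
    }


for distance, rate in recall_by_distance(observations).items():
    print(f"{distance}: {rate:.0%} recalled")
```

Bucketing by distance rather than by absolute turn number keeps the focus on how long the agent had to hold the fact, which is the thing a turn 2 to turn 3 check cannot exercise.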

Conversation-Level Metrics for AI Agent Monitoring

Once the failure modes were clear, the next question was obvious: how do you actually measure this? Turn-level scores weren't going to cut it - we needed metrics that treated the conversation as the unit of evaluation, not the individual response.

Since Netra was already running the simulations, using it for scoring too was a natural fit. Here are the metrics we focused on:

Guideline Adherence - Did the role-play agent follow its persona instructions throughout the entire conversation, not just at the start? This is where context drift shows up in the numbers.

Conversation Completeness - Did the agent address everything the sales rep brought up? A role-play agent that ignores half of what the rep says is training them to expect a conversation that doesn't exist.

Conversation Flow - Did the conversation hold together logically from start to finish? Did the prospect's responses stay consistent with the character and context that had built up?

Conversation Memory - Did the agent correctly use information that came up earlier in the conversation? If the rep mentioned a specific pain point in turn 4, did the prospect's behaviour reflect that in turn 14?

Factual Accuracy - Were the prospect's claims consistent with their defined background? A prospect who says they "never use third-party vendors" in turn 3 and then asks for a vendor recommendation in turn 11 has broken the simulation.

Goal Fulfillment - Did the conversation reach its intended outcome - whether that's a completed practice run, a natural close, or a realistic rejection? This is the north star.

Information Elicitation - Did the role-play agent reveal information at the right pace, the way a real prospect would? Or did it dump everything upfront, or withhold so much that the rep had nothing to work with?

One thing worth calling out: these metrics don't always move together, and that's actually useful. We had conversations where Flow scored high - the dialogue felt coherent - but Goal Fulfillment was low because the role-play agent never created a realistic enough challenge for the rep to navigate. High flow, low fulfillment is its own failure mode. Tracking them separately is what lets you see that.
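A small sketch of why the separate scores matter. The numbers, the 0-to-1 scale, and the 0.6 threshold are all made up for illustration and are not measurements from our runs: an averaged score clears the bar while one metric is clearly failing.

```python
from statistics import mean

# Hypothetical conversation-level scores on a 0-1 scale (illustrative only).
scores = {
    "guideline_adherence": 0.9,
    "conversation_flow": 0.95,
    "conversation_memory": 0.85,
    "goal_fulfillment": 0.3,
}

THRESHOLD = 0.6  # assumed pass mark, chosen arbitrarily for this sketch


def failing_metrics(metric_scores, threshold=THRESHOLD):
    """Names of metrics that fall below the threshold on their own."""
    return sorted(name for name, value in metric_scores.items() if value < threshold)


aggregate = mean(scores.values())
print(f"aggregate passes: {aggregate >= THRESHOLD}")
print(f"metrics failing individually: {failing_metrics(scores)}")
```

Gating on each metric separately, rather than on the average, is what surfaces the high-flow, low-fulfillment case.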

What the Numbers Actually Looked Like

Before we started using Netra's multi-agent simulation, our per-turn scores looked reasonable - good enough that we might have shipped it.

When we ran the same agent through full conversation simulations, the picture changed completely. Goal Fulfillment and Conversation Memory dropped significantly on longer runs. Guideline Adherence, which looked solid turn-by-turn, told a very different story when scored across the full arc of a conversation.

The turn-level scores had been masking real problems. The role-play agent felt fine in short bursts and fell apart over a full call - which is exactly the scenario that matters for training.

What This Actually Changes

Once you're evaluating at the conversation level, you stop asking "does this response sound right" and start asking "does this agent hold up over time." Those are different questions, and they require different things from your prompts, your context management, and how you think about persona design.

You also get much more specific about where things break. Is the drift a prompt issue? A context window problem? A memory failure? Conversation-level agent monitoring gives you a starting point for that diagnosis that turn-level scores simply can't.

And perhaps most importantly - you stop shipping agents that look good in isolated testing and fall apart the moment a real conversation gets long or complicated. Because you've finally built an eval that resembles what production actually looks like.

Multi-turn conversations aren't a nice-to-have. They're the whole product, and your evals should treat them that way. Single-turn evals see the sentences; multi-turn simulation reads the story.

Building a conversational agent that actually holds up over a full conversation is hard enough. Evaluating it shouldn't slow you down further. If you want to skip the eval infrastructure and get straight to improving your agent's quality, check out Netra.

FAQs

1. What is a multi-turn AI agent conversation?

A multi-turn conversation is any interaction where the agent needs to carry context across multiple exchanges - where what was said in turn 3 should still matter in turn 15. It's not a series of independent prompts. It's a single, evolving thread, and that changes everything about how you evaluate it.

2. How is multi-turn evaluation different from single-turn evaluation?

Single-turn evaluation scores each response in isolation. Multi-turn evaluation scores the conversation as a whole - tracking whether the agent stayed consistent, remembered earlier context, and actually achieved its goal. An agent can score well turn-by-turn and still fail completely at the conversation level.

3. What failure modes only appear in multi-turn conversations?

Context drift (the agent gradually deviating from its instructions), contradiction handling (failing to track when a user changes their position), mid-conversation persona switches, and memory failures under load - where the agent stops using information the user shared earlier in the conversation.

4. What is LLM-as-judge and how is it used in multi-turn evaluation?

LLM-as-judge is an evaluation approach where a language model scores another model's outputs using a structured rubric. In multi-turn evaluation, the full conversation transcript is passed to the judge model, which scores dimensions like Conversation Flow, Goal Fulfillment, and Guideline Adherence across the entire conversation - not just one turn at a time.

5. What metrics matter most for evaluating a conversational AI agent?

The most important conversation-level metrics are Goal Fulfillment (did the agent accomplish what it was supposed to?), Conversation Memory (did it retain and use information from earlier turns?), and Guideline Adherence (did it follow its instructions throughout?). These tell you far more than per-turn accuracy scores.

6. How do I know if my conversational agent is ready for production?

If you're only evaluating it turn-by-turn, you don't. Full conversation simulation with metrics like Goal Fulfillment, Conversation Memory, and Guideline Adherence scored across the complete interaction is the only reliable way to know whether your agent holds up over a real conversation.
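For readers who want to see the shape of the LLM-as-judge approach from FAQ 4, here is a minimal sketch. It is not Netra's implementation: the prompt wording, the 1-to-5 scale, and the `build_judge_prompt`, `judge_conversation`, and `fake_model` names are assumptions made for illustration. The model call is a pluggable function, so the example runs offline with a stub in place of a real LLM.

```python
import json
from typing import Callable

RUBRIC = ["Conversation Flow", "Goal Fulfillment", "Guideline Adherence"]


def build_judge_prompt(persona: str, transcript: list[tuple[str, str]]) -> str:
    """Put the persona and the full transcript into a single grading prompt."""
    lines = [
        f"[turn {number}] {speaker}: {text}"
        for number, (speaker, text) in enumerate(transcript, start=1)
    ]
    return (
        "You are grading a full conversation, not individual replies.\n"
        f"Persona instructions:\n{persona}\n\n"
        "Transcript:\n" + "\n".join(lines) + "\n\n"
        "Score each dimension from 1 to 5 and reply with JSON only, "
        "using these keys: " + ", ".join(RUBRIC)
    )


def judge_conversation(
    persona: str,
    transcript: list[tuple[str, str]],
    call_model: Callable[[str], str],
) -> dict[str, int]:
    """Send the whole conversation to the judge and validate its reply."""
    raw_reply = call_model(build_judge_prompt(persona, transcript))
    scores = json.loads(raw_reply)
    missing = [key for key in RUBRIC if key not in scores]
    if missing:
        raise ValueError(f"judge omitted dimensions: {missing}")
    return {key: int(scores[key]) for key in RUBRIC}


def fake_model(prompt: str) -> str:
    # Stand-in for a real LLM call; returns fixed scores so the sketch runs offline.
    return json.dumps(
        {"Conversation Flow": 4, "Goal Fulfillment": 2, "Guideline Adherence": 3}
    )


transcript = [("rep", "Hi, do you have a minute?"), ("prospect", "Who is this?")]
print(judge_conversation("Skeptical mid-level manager", transcript, fake_model))
```

Swapping `fake_model` for a function that calls an actual model is the only change needed to try this against real transcripts. Validating the reply before trusting it matters because judge models do not always return well-formed JSON.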